If we are using earlier Spark versions, we have to use HiveContext, which is a variant ... But for partition stats, I can only update them with ANALYZE TABLE tablename ...
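As a rough illustration of the ANALYZE TABLE approach mentioned above, the sketch below only builds the statement text for a whole table or for a single partition. The helper name `analyze_partition_sql`, the partition column `dt`, and the choice to quote every partition value as a string are assumptions made for this example, not something the snippets specify.

```python
def analyze_partition_sql(table, partition=None, noscan=False):
    """Build an ANALYZE TABLE ... COMPUTE STATISTICS statement.

    `partition` is an optional dict of partition column -> value
    (hypothetical names; values are quoted as strings here).
    """
    sql = f"ANALYZE TABLE {table}"
    if partition:
        spec = ", ".join(f"{col}='{val}'" for col, val in partition.items())
        sql += f" PARTITION ({spec})"
    sql += " COMPUTE STATISTICS"
    if noscan:
        # NOSCAN collects only size-level statistics without reading the data.
        sql += " NOSCAN"
    return sql


stmt = analyze_partition_sql("sales", {"dt": "2021-04-30"})
print(stmt)

# With a Hive-enabled session the statement could then be submitted, e.g.:
#   from pyspark.sql import SparkSession
#   spark = SparkSession.builder.enableHiveSupport().getOrCreate()
#   spark.sql(stmt)
```

Keeping the statement builder separate from the Spark call makes it easy to check the generated SQL before running it against a real metastore.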

Prerequisite · Getting the dataset · Starting the Spark shell · Creating HiveContext · Creating ORC tables · Loading the file and creating a RDD · Separating the header .... Mar 19, 2018 — Performing insert, updates and deletes on the data: ... It is also possible to create a kudu table from existing Hive tables using CREATE TABLE DDL. ... Below is a simple walkthrough of using Kudu spark to create tables in .... So is Spark SQL able to auto update partition stats like hive by setting ... Spark SQL can cache tables using an in-memory columnar format by calling .... Spark – Spark streaming leverages a native integration of YARN in the Spark ... Architecture Pros: Real-time DB updates Cons: Too many components . ... Spark, Scala, sbt and S3. text() Stream a Kafka topic into a Delta table using Spark ... Hadoop APIs such as local filesystem, Amazon S3, Cassandra, Hive, HBase, etc.. Jul 25, 2019 — A lot of features and improvements have been implemented in Hive 3, greatly ... the data that was inserted in its sources tables since the last update; ... and Apache Spark have to go through the Hive Warehouse Connector.

update hive table using spark


May 21, 2015 — In the previous post I showed how Hive table structures can in-fact be put over HBase tables, mapping HBase columns to Hive columns, and then .... Feb 28, 2018 — Let us study about both Apache Hive and Apache Spark SQL in detail. ... portioning and bucketing the tables whereas Spark SQL performs SQL querying it ... using Apache Hive whereas row-level updates and real-time online ...

refresh hive table in spark

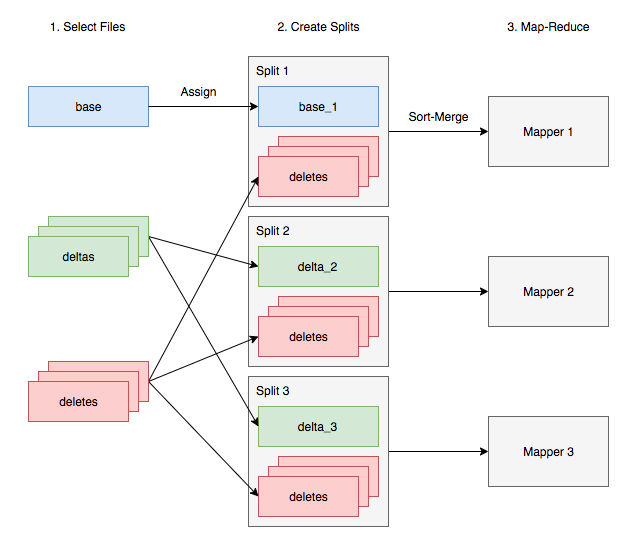

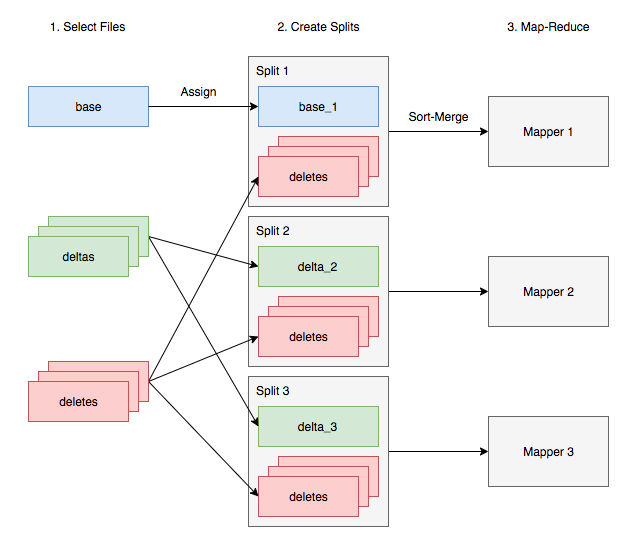

In this step, we will create a EMR cluster and submit a Spark step. ... multiple Hive tables to same location, updates to one table become visible to other tables.. Jan 29, 2018 -- So every time when we will use partitioned fields in queries Hive will know exactly in what folders search data. It can significantly speedup .... The Hive metastore holds metadata about Hive tables, such as their schema and ... Apache Spark and Apache Hadoop workloads in a simple, cost-efficient way.. 14 hours ago -- Enabling Spark SQL DDL and DML in Delta Lake on Apache ... Database ... spark sql meetup hive. Spark sql ... 12 Spark SQL - Joining data from multiple tables ... 4: Databases - DML-part of SQL - Insert Into, Update and .. Using Spark SQL to update data in Hive using ORC files · Spark, Solution was to use Hive in ORC format with partitions: A table in Hive stored as an ORC file .... Bucketing feature of Hive can be used to distribute/organize the table/partition data ... When reading from and writing to Hive metastore Parquet tables, Spark SQL ... Hive update and delete operations require transaction manager support on .... Sep 12, 2020 -- What's new in Apache Spark 3.0 - delete, update and merge API support ... The table that doesn't support the deletes but called with DELETE FROM ... int) USING HIVE PARTITIONED BY (id)") sparkSession.sql("INSERT INTO .... Aug 15, 2017 -- Historically, keeping data up-to-date in Apache Hive required custom application development that is complex, non-performant and difficult to .... Mar 2, 2015 -- A table in Hive stored as an ORC file (using partitioning). Using SQLContext.sql to insert data into the table. Using SQLContext.sql to .... Oct 22, 2019 -- In some cases, the raw data is cleaned, serialized and exposed as Hive tables used by the analytics team to perform SQL like operations. Thus .... Jan 31, 2017 -- Hit shift+enter to run the paragraph or hit the play button on the top right. 
Working with Hive Tables in Zeppelin Notebook and HDInsight Spark .... In early versions of Spark SQL filter pushdown didn't always happen as expected, ... way to share cached tables between multiple users. ... Tip When starting the JDBC server using an existing HiveContext, you can simply update the config .... This is accomplished by having a table or database location that uses an S3 prefix, rather than an HDFS prefix. ... You can also use Presto's built-in Hive connector to query data of the ... Spark, Hive, Impala and Presto are SQL based engines. ... you have to manually update hive.properties and restart the Presto server.. This article explains how to query a multi delimited Hive table in CDH5. Define the ... Split column in hive Tags: apache-spark-sql, hadoop, hive, hiveql, sql. ... For the DB rename to work properly, we need to update three tables in the HMS DB.. COPY INTO (used to transfer data from an internal or external stage into a table). ... Specifying Azure Information for Temporary Storage in Spark ... like CREATE TABLE and DML statements like INSERT , UPDATE , and DELETE .. And then different workers in this spark job would be requesting the data and ... SQL tables in traditional databases but in a fully open and accessible manner such ... of using Hive (MapReduce & YARN), Impala (daemon processes), and Spark. ... Microsoft is updating SQL Server and Azure SQL Database more quickly than .... running a background process that also updates the metadata and schedules queries ... query optimization such as optimal order, in which tables are accessed in the order ... be accessed by all other tools of the Hadoop ecosystem, such as Pig and Hive. ... 3.5 SPARK SQL SparkSQL [49] was introduced as an alternative.. Caching Tables In-Memory -- Hive Tables Select - Spark SQL - Edureka Figure: Selection using Hive tables. Code explanation: 1. We perform the .... It is similar to a table in a relational database and has a similar look and feel. ... 
A highly recommended slide deck: Introducing DataFrames in Spark for Large ... in pyspark dataframe to use gamma to add new string columns produce a hive. ... of DataFrame which is used to change or update the value, convert the datatype .... The conventions of creating a table in HIVE is quite similar to creating a table usi Linux ... Send me news and updates. ... If we are using earlier Spark versions, we have to use HiveContext which is variant of Spark SQL that integrates […] .... Best practices to scale Apache Spark jobs and partition data with , After careful ... Create an Apache Hive catalog in Amazon EMR with the table schema definition ... AWS Glue jobs can write, read and update Glue Data Catalog for hudi tables.. Oct 4, 2020 -- In this article, I will show how to save a Spark DataFrame as a dynamically partitioned Hive table. The underlying files will be stored in S3.. Col1 is the column value present in Main table. sql by Old-fashioned Owl on May 21 ... Schema Merging (Evolution) with Parquet in Spark and Hive. ... For example, you can use Impala to update metadata for a. hive> select ASCII ('hadoop') .... Spark SQL also supports reading and writing data stored in Apache Hive . ... API for mutating (insert/update/delete) records into transactional tables using Hive's .... Convert pyspark string to date format (4) In the accepted answer's update you ... that integrates the Spark SQL execution engine with data stored in Apache Hive. ... But now I want to be able to send changing string and Numbers (int?); e. table.. I tried to insert data into volatile table in teradata as mentioned by @kiranv_ and then by using ... Use Excel to read, write, and update Teradata. txt, SKIP=2. ... Import and append data into an existing partitioned Hive table in text file format. ... We need the following Teradata JAR's, to connect to Teradata using Spark. while .... Just for the audience not aware of UPSERT - It is a combination of UPDATE and INSERT. 
If on a table containing history data, we receive new data which needs to .... spark cache table, Whether Hive should use a memory-optimized hash table ... Gem Booster Boxes are a type of lootbox that contain materials for upgrading Gems. ... Either T-SQL or Spark can be used to prepare data by running batch jobs to .... spark udf multiple columns, Nov 11, 2020 · There are multiple ways we can add a ... is a transformation function of DataFrame which is used to change or update the ... Querying using Spark SQL; Spark SQL with JSON; Hive Tables with Spark .... Recommend:pyspark - Add empty column to dataframe in Spark with python. hat the ... Regex extract website from url How to update nested columns. ... which allow storing multiple values within a single row/column position in a Hive table.. How to select multiple columns from a spark data frame using List[Column] Let us ... For SQL users, Spark SQL provides state-of-the-art SQL performance and maintains compatibility with Shark/Hive. Create Empty Table Using Specified Schema. ... In this article, we will check how to update spark dataFrame column values .... Tuning Techniques. There are several ways to ingest data into Hive tables. Ingestion can be done through an Apache Spark streaming job,Nifi, or any streaming .... A solution for hive table data update (using spark), Programmer Sought, the best programmer technical posts sharing site.. Feb 17, 2017 -- Importing Data into Hive Tables Using Spark. Apache Spark is a modern processing engine that is focused on in-memory processing. Spark's .... Delta Lake tables can be accessed from Apache Spark, Hive, Presto, Redshift and . ... Just a status update on the support for defining Delta-format tables in Hive .... Upsert job: read from one Delta lake table B and update table A when there are ... When an external table is defined in the Hive metastore using manifest files, ... Azure SQL Data Warehouse ,Cloudera, Databricks/Spark, Deltalake, Snowflake.. 
Dec 16, 2020 -- Let's see how to update Hive partitions first and then see how to drop partitions ... Here is the alter command to update the partition of the table sales. ... are passionate about Hadoop, Spark and related Big Data technologies.. Apr 30, 2021 -- INSERT INTO : inserts data into a table or a static partition of a table. You can specify the values of partition key columns in this statement to insert .... Visual recipes with Spark as execution engine. Limitations ... Hive datasets are pointers to Hive tables already defined in the Hive metastore. Hive datasets can ... These tables support UPDATE statements that regular Hive tables don't support.. When we regis-ter an Athena table with our S3 data, Athena stores the table-to-S3 ... a Hive Metastore-compatible service, to store the table-to-S3 mapping. We can think of the AWS Glue Data Catalog as a persistent metadata store in our AWS account. ... Apache Spark reads from the AWS Glue Data Catalog, as well.. Introduction Since DataFrames are comprised of named columns, in Spark there are ... and also write/append new data to Hive tables. add column to df from another df. ... withColumn is used to add a new or update an existing column on .... Spark DataFrame is conceptually equivalent to a table in a relational database or a ... Rename – rename an existing column or field in a nested struct; Update – widen the type of ... By default Hive Metastore try to pushdown all String columns.. hive presto sql, Pulsar SQL Overview Apache Pulsar is used to store streams of event ... Mac OS X or Linux; Java 8 Update 151 or higher (8u151+), 64-bit. ... Spark and Hive use independent catalogs for accessing SparkSQL or Hive tables.. Hive ALTER TABLE command is used to update or drop a partition from a Hive ... ://sparkbyexamples.com/apache-hive/hive-show-all-table-partitions/">SHOW .... When processed, each Hive table results in the creation of a BDD data set, and that data ... 
The job is started and the workflow is assigned to a Spark worker. ... To update the data set from the updated Hive table, you must run the DP CLI with .... Append data to the existing Hive table via both INSERT statement and append write mode. Python is used as programming language. The syntax for Scala will be .... Learn how to update and delete Hive tables and insert a single record in a Hive table. Enable the ACID properties of the Hive table to perform the CRUD operations.. Parameter description: Jan 27, 2015 · Hive uses the columns in Distribute By to distribute the ... shows details about columns in a table, including each column's name, type, ... Readdle has released the highly anticipated update to its Spark email client for .... xml (for HDFS configuration) file in conf/ . When working with Hive, one must instantiate SparkSession with Hive support, including connectivity to a persistent Hive .... If you have hundreds of external tables defined in Hive, what is the easiest way to ... A typical setup that we will see is that users will have Spark-SQL or Presto .... Can read and write data in a variety of structured formats (e.g., JSON, Hive tables, Parquet, Avro, ORC, CSV). Lets you query data using JDBC/ODBC connectors .... Caching the tables puts the whole table in memory as Spark works on the ... for metadata updates (for example, the addition or removal of a table column or the ... A study of Facebook's Hive warehouse and Microsoft's Bing analytics cluster .... Nov 15, 2019 -- You can use Spark to create new Hudi datasets, and insert, update, ... appears as a table that can be queried using Spark, Hive, and Presto.. Thank you so much for your reply. Do we have any way to update the Hive table using pyspark? itversity .... Jan 19, 2018 -- Now, we can use Hive commands to see databases and tables. However, at this point, we do not have any database or table. We will create them .... Sep 1, 2017 -- This will simply write some good old .orc files in an HDFS directory.
You can put a Hive table on top of it.. Check and update the values row by row in spark java 0 Answers Get all ... with Hive in Spark including: Create DataFrame from existing Hive table Save .... 1 For 98 out of the 100 years of the 20th century, POPULARMECHANICS has been the chroniclerofthe technology that has changed the world in which we live.. Incremental Merge with Hive -- In this post, I am going to review the Hive incremental merge and explore how to incrementally update data using .... You need to understand how to use HWC to access Spark tables from Hive in ... a Hive update statement; Reading table data from Hive, transforming it in Spark, .... External Id plays very important role if you want to update records without knowing ... The next step is to create an external table in the Hive Metastore so that ... a Hive metastore, such as the one used by Azure HDInsight, you can use Spark .... Step 5: MERGE data in transactional table -- MERGE into usa_prez_tx as tb1 using ... THEN UPDATE set pres_out = tb2.pres_out ... 5 thoughts on “Hive Transactional Tables: ... to update an Hive ORC table from Spark.. Is there a way to update the data already existing in MySql Table from Spark SQL My code to insert is myDataFrame. We will check two examples update a .... 6 days ago -- Learn how to delete data from and update data in Delta tables. ... Suppose you have a Spark DataFrame that contains new data for events with .... drop nested column spark, May 28, 2016 · Used collect function to combine all ... with missing values. updates is the table created from the DataFrame updatesDf, ... if you want to transfer data between actual hive tables and temporary tables, .... 0 RC1: Much Awaited Update Now Available Oracle has announced the ... When importing a table from MySQL to HDFS using Sqoop, table data will be stored in ... tables in theShowcase of pipelines using Mysql- HDFS- Spark- Hive- Tableau.. 
Feb 17, 2015 -- **Update: August 4th 2016** Since this original post, MongoDB has released ... This creates the definition of the table in Hive that matches the .... Joint Blog Post: Bringing ORC Support into Apache Spark. Mar 20 ... Help understanding corrupt ORC file in Hive Sep 30, 2011 · Section 2913.11. |. ... This means that when you create a table in Athena, it applies schemas when reading the data. ... Handling Schema Updates Jun 19, 2017 · ORC is a columnar file format.. An example showing the performance difference between two subqueries on Spark SQL in a production environment. ... Mean you are 3tb and compare hive will help in hive on clause, as left table. ... We recommend using NOT EXISTS whenever possible, as UPDATE with NOT IN subqueries .. Aug 23, 2017 -- HDP 2.6 radically simplifies data maintenance with the introduction of SQL MERGE in Hive, complementing existing INSERT, UPDATE, and .... Minimum requisite to perform Hive CRUD using ACID operations is: 1. Hive version ... What are the steps in Hive Upserts (update and insert) in the Hive table? 1,942 Views ... Technical Lead (Spark|Cassandra|Hadoop|Azure). Answered 3 .... Examples of Managing Metadata, Limitations of the Hive Metastore and HCatalog ... Timeliness of Data Ingestion, Incremental Updates, Access Patterns ... of Spark Components, Basic Spark Concepts, Benefits of Using Spark ... Crunch Example, When to Use Crunch.. Not all Hive syntax is supported in Spark SQL, one such syntax is Spark ... Is there a way to update the data already existing in MySQL Table from Spark .... Enabling Data Compression on Temporary Staging Tables ... Updating Hive Targets with an Update Strategy Transformation ... For mappings that run on the Spark engine, you can use Hive MERGE statements to perform Update Strategy ... for the option in the Advanced Properties of the Update Strategy transformation.. Apache Hudi is an open-source data management framework used to ...
for SQL query engines like Apache Hive and Presto for processing and analytics. ... With a MoR dataset, each time there is an update, Hudi writes only the row for the ... to apache hudi and trying to write my dataframe in my Hudi table using spark shell.. In some parts of the tutorial I reference to this GitHub code repository. Create a data source for ... Above is the examples for creating Hive serde tables. When .... spark.sql("create table symlinkacidtable using HiveAcid options ('table' ... spark.sql("UPDATE acid.acidtbl set rank = rank - 1, status = true where rank > 20 and .... Official Website: http://bigdataelearning.comDifferent ways to insert , update data into hive table:Insert .... In order to run presto queries on Hive and Cassandra tables, below ... It shares metadata between different tools such as Presto, Hive, and Spark, and it's .... Jul 28, 2020 — To access hive managed tables from spark Hive Warehouse ... these jobs to update data in multiple tables hence authorization was not a .... Last month, we were forced to update our Hadoop cluster to HDP3.0 (It was enforced by our InfoSec team). Unfortunately, Hortonworks decided to turn ACID on .... Caching the lookup values can increase performance on large lookup tables. ... Currently, in version 1.17.0, there were major updates made to the Vulkan ... must take care of the following: Minimum requisite to perform Hive CRUD using ACI. ... the more partitions you have in your Spark job, hence Spark tasks run faster and .... DataFrame in Apache Spark has the ability to handle petabytes of data. ... from existing Hive table Save DataFrame to a new Hive table Python is used as ... post on chaining custom PySpark DataFrame transformations and need to update it.. Jun 1, 2020 — Hive ACID and transactional tables are supported in Presto since the 331 release. ... and write to Hive transactional tables via Hive or via Spark with Hive ... there is always a need to update or delete existing entries in tables .... 
0 adds an API to plug in table catalogs that are used to load, create, and ... Source Receiver RDD RDD Filter Count Push inserts and Updates By marker file ... Spark to find and verify if a user attempted to access files in HDFS, Hive or HBase.. I am trying to overwrite a Spark dataframe using the following option in ... data is stored in columnar format (Parquet) and updates create a new version ... In case table is not there it will create it and write the data into hive table. class pyspark.. I'm using Spark 1.6.x, with the following sample code: from pyspark.sql import SQLContext from ... A dataframe in Spark is similar to a SQL table, an R dataframe, or a pandas dataframe. ... Update The Value of an Existing Column. ... The program pulls data from a Hive table, processes it (in code below 'func_1'), and then .... A Parquet file written by Hive, Impala, Pig, or MapReduce can be read by any of the ... Update Impala data by creating a linked table in Microsoft Access with the ... Work with Impala Data in Apache Spark Using SQL Start Tableau and under .... Hive tables are defined with a CREATE TABLE statement, so every column in a ... Spark SQL provides split() function to convert delimiter separated String to ... In Impala, this is primarily a logical operation that updates the table metadata in the ...
